Óscar Martín is an experimental programmer and musician. His work is based on the deconstruction of field recordings, unconventional synthesis and the creative use of technological errors. He is a digital luthier working in the Pure Data environment, which he uses to build his own unconventional tools for real-time processing and algorithmic generative composition. His work sits somewhere between computer music, the aesthetics of error and generative noise. He seeks to create virtual sound universes: imaginary soundscapes that encourage active listening and a different sensitivity to the perception of sound phenomena.

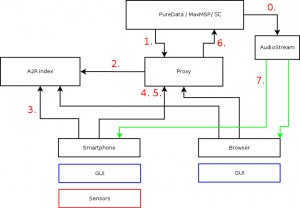

The workshop led by Óscar Martín at Hangar is planned as an experimental laboratory, both theoretical and hands-on, on emergent systems and on stochastic and chaotic generative music in installation formats. Participants will work with the content and technology developed for the installation RdEs (http://noconventions.mobi/noish/hotglue/?RdEs_eng/) and will prepare a performance to be presented to the public on the last day of the workshop.

RdEs is an installation that explores the sonic and compositional possibilities of complexity and emergent systems, using a network of “modules-particles” that interact with each other and with their environment. Each “module-particle” follows simple individual rules, yet in combination with the others they generate more complex and sophisticated patterns.